Well, 2016 has begun, so I thought I’d take a look back at a year with Spectrum Scale. Actually, at the start of 2015, Spectrum Scale wasn’t even a term we were using – GPFS or Elastic Storage were the names we all had in our heads. I’ve heard various rumours about whether Elastic Storage was ever meant to be used generally, whether it was for the ESS server only, or whether it was the code name for the rebranding we eventually came to know as part of the Spectrum portfolio of products. Still, back at the start of 2015 things were different: the System x and GSS divestiture to Lenovo was only just completing in the UK, and protocol support for non-native GPFS access was just an idea on a roadmap (unless you bought SONAS).

Shuffling back a few months into 2014, IBM had just published a white paper on using Object on GPFS. It wasn’t supported back then, but there were guidelines on how to do it. In fact, it wasn’t until the May 2015 release of 4.1.1 that non-native GPFS protocol access was with us. This actually delayed one of my projects by a couple of weeks – we were about to go into pilot with a research data store built on Spectrum Scale when IBM announced at the York May user group that protocol support was imminent. I did a talk at that user group on our storage solution, and it was interesting to hear about async-DR there, but I still think our full HA active-active solution across two data centres is the right option for us and just works! That said, the cluster export services (CES) and SMB protocol support are a massive improvement on our deployment based on SerNet Samba. It did take until the 4.2 release to get all the bugs ironed out in the CES scripts, as we aren’t pure AD or pure LDAP and so needed to use custom authentication modes.

Protocol support isn’t just SMB support of course; NFS and object are also provided. cNFS has been around for a while, but protocol support uses the NFS Ganesha server. One reason IBM have moved to this is that it allows them some control over support for the NFS server stack – for example, using the kernel stack may require your Enterprise Linux vendor to provide an updated kernel. They also work closely with the Ganesha project to get their fixes accepted upstream.

2015 also saw the first steps towards six-monthly major release cycles, and the year closed with 4.2 hitting my storage clusters. We’re also seeing roughly six-weekly PTF releases, though IBM have kept their excellent support approach of providing interim EFIX builds for customers until a fully tested PTF release is out. This is great, as we get really timely fixes for the issues we are seeing (it also means IBM get to test the fixes out before they become generally available).

Within the IBM teams, there has been a move to agile development methodologies and scrum teams. This has meant a move away from traditional road-map talks at the user group meetings, and more towards “this is what we’re working on” talks. There’s also been a lot of work under the hood on getting the first and second line support structures working together for Spectrum Scale. We have slides from the SC15 meeting on these if you are interested.

I’m aware of a few cloud deployments in the UK sitting on top of Spectrum Scale storage, and it’s been an interesting ride for us! We see some great performance at times – 15 seconds to provision a VM with any size of disk is pretty neat (it uses mmclone under the hood and copy on write), but equally we see performance issues with the small block updates from the VM disk image. One feature that you may have missed, as it arrived in a PTF release, is HAWC – highly available write cache. This is basically a write coalescing mechanism that allows GPFS to buffer small writes via a (flash) layer, which should potentially help with the small write issues for VM disk image storage. My testing stumbled a few times with this – the first “release” didn’t quite work as the NSD device creation had overly strict checks – it should be possible to run HAWC on client systems. I also dead-locked my file-system testing it out, as one of my clients could resolve the NSD servers, but not all the clients in the cluster… I’ve also had a little instability when shutting down a number of my clients, as all the HAWC devices become unavailable. 2016 will see further testing of this from my projects!

Cloud still has a number of unanswered questions, partly around security and access. A requirements document has gone to IBM on this, so we’ll see how this progresses in 2016!

4.1.1 brought in the Spectrum Scale installer. I must say I’ve not used this in anger as it doesn’t really work well with our work-flows, but I was able to unpick what it was doing fairly easily to get my protocol servers installed properly. The initial docs on manual installation were sparse, but this has been improved following, er, user feedback ;-). As well as the installer and protocol support, there is also a new performance metrics tool which can collect data from the protocol support parts as well as core GPFS functionality.

4.2 arrived in mid-November, just after SC15 in Austin, bringing a host of new features. Perhaps the biggest of these was the GUI. This allows monitoring and basic control of GPFS clusters, including ILM and protocol features. IBM spent a lot of time demoing the GUI pre-release and listening to user feedback, so hopefully we’ll see some of that feedback going into improving the GUI. A GUI installer has been added as well, though I’m a little unsure about who this is really targeted at. Compression for the file-system is now available as a policy driven process, with un-compression happening on file access. Basic QOS features for maintenance procedures are now available, for example to limit the impact of a restripefs. This isn’t something I’ve been able to test yet, as it requires the file-system and metadata to be fully upgraded to the 4.2 version, which you can’t do if you have older versions mounting the file-system… I nearly have all my clusters up to 4.2 now though!

4.2 also had updates for the object interface, and now provides unified file and object, i.e. you can do POSIX style access to a file as well as access it via object methods. Finally, the Hadoop interface has been updated with a new version of the connector.

We’ve also seen the strengthening of the UK development team, led by Ross who has been great at working with the User Group, kicking off the meet the devs meetings here and getting members of his team along to our events. Meet the devs has been on UK tour this year, getting to London, Manchester, Warwick and Edinburgh (and we’re heading to Oxford in February 2016). The UK team is working on the installer and all things related to installing as well as working on some of the 2016 major projects (problem determination being one of them). I know the UK team has been talking to UK customers and members of the group and taking in feedback to help drive development. I also met the product exec who was in the UK for a few days (and again in Austin) to talk user group issues.

And finally, away from pure IBM work on Spectrum Scale, we’ve seen the release of Seagate’s ClusterStor appliance running Spectrum Scale, DDN have the SFA14k storage, and ESS and GSS have been updated as well. I’ve also had chats with other vendors looking at appliance approaches, so it will be interesting to see what 2016 brings!

The IBM Cleversafe acquisition will be an interesting one to watch in 2016, as will how IBM go about integrating it with Spectrum Scale…

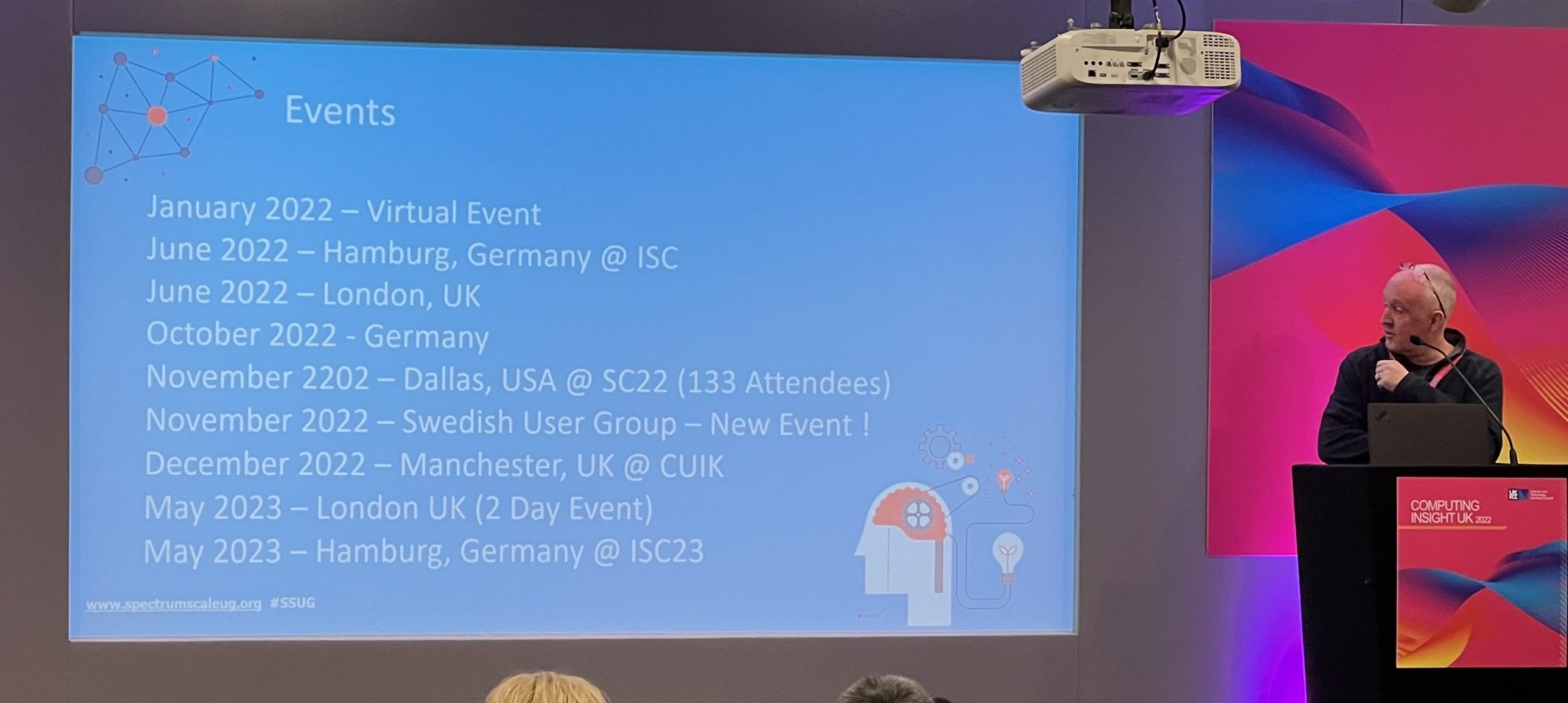

The user group has been busy this year and I can’t believe how busy it’s been since I took over from Jez as chair in June! We’ve seen the launch of a US chapter with a meet the devs meeting in New York and a half day session at SC15 in Austin. The US group is being taken forward by Kristy and Bob with help from Doug and Pavali at IBM – thanks guys! The Austin meeting was a great success and very well attended. We also closed out the year in the UK with a successful user group at Computing Insight UK in Coventry.

Plans for 2016 are in flow, with the UK user group meeting booked for May and the first meet the devs planned for February.

And really finally, thanks to the user community – we wouldn’t be able to do meetings and events without your attendance and talks – and also to IBM: Akhtar and Doris, the marketing people, and all the developers, researchers and managers who get the developers along to the meetings.